System Summary

I’m using Panasas cluster version 5.5.1-1098440.68 and OpenNMS version 1.12.9, running on RHEL 6.3. I’ve installed:

- net-snmp-utils-5.5-41.el6.x86_64

- net-snmp-libs-5.5-41.el6.x86_64

- net-snmp-5.5-41.el6.x86_64

- jdk-7u67-linux-x64.rpm

Quick steps for adding a new system

Once OpenNMS has been configured to monitor Panasas systems, follow the steps below for each new system:

- Only configure the director blade running the realm service RM President

- Add the Director Blade IP address to:

  - The PANASAS package in /opt/opennms/etc/collectd-configuration.xml

  - The SNMPv1 section of /opt/opennms/etc/snmp-config.xml

- In the /opt/opennms/etc/poller-configuration.xml file, add the first and last IP address of the system’s configured range to:

  - An exclude range in the example1 package

  - An include range in the panasas package

- Restart the OpenNMS service

- Add a new node using the Director Blade’s IP address to the Panasas provisioning requisition group

- Click on the provisioning requisition group’s Synchronize button

Adding SNMP MIBs

One off Steps

To get the Panasas MIBs directly off the system, you can collect the entire set by running the following command from the CLI:

[pancli] collect_logs match /pan/mibs/*

You can then scp the resulting archive off the system and extract it. Alternatively, in the GUI go to Configuration > SNMP and click on the Download MIB definition button.

To enable SNMP via the GUI, go to Configuration > SNMP and set the community name. I used public.

I then used the Opennms MIB compiler: Admin > SNMP MIB Compiler

I uploaded the MIBs and compiled them. The formatting wasn’t quite what OpenNMS expected in the following MIB files:

- PANASAS-HW-MIB-V1.mib

- PANASAS-PERFORMANCE-MIB-V1.mib

- PANASAS-NOTIFY-MIB-V1.mib

To cut a long story short, I added some missing commas, changed the case of LACP to lacp, added a missing import of RFC1213-MIB, removed extra quote marks and extra commas, and corrected some object references to obsolete OBJECT names. Phew!

Once they’d compiled OK, OpenNMS had created the required data collection group files in /opt/opennms/etc/datacollection:

- PANASAS-BLADESET-MIB-V1.xml

- PANASAS-PERFORMANCE-MIB-V1.xml

- PANASAS-SYSTEM-MIB-V1.xml

- PANASAS-VOLUMES-MIB-V1.xml

It had also created the SNMP graph config files in /opt/opennms/etc/snmp-graph.properties.d/:

- PANASAS-BLADESET-MIB-V1-graph.properties

- PANASAS-PERFORMANCE-MIB-V1-graph.properties

- PANASAS-SYSTEM-MIB-V1-graph.properties

- PANASAS-VOLUMES-MIB-V1-graph.properties

Configure Opennms for Panasas data collection

One Off Steps

On their own, the configuration files created by the MIB compiler will not start collecting anything, even after the system has been added. You need to add some more configuration:

- SNMP collection

- Data collection Groups (created by the MIB compiler)

- System Definitions

SNMP collection

Via the GUI go to:

Admin > Manage SNMP Collections and Data Collection Groups > SNMP Collections Tab

Click on Add SNMP Collection button

Enter a relevant name, for example Panasas

Set SNMP Storage Flag to select

In Include Collections, click Add, then select and add all the relevant Panasas collection groups

Click Save

This will add the configuration to the /opt/opennms/etc/datacollection-config.xml file

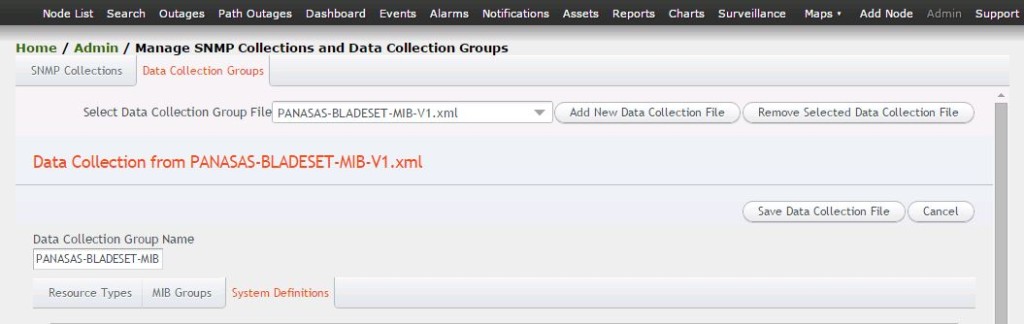

System Definitions

System Definitions (systemDef) are configuration entries added to each of the data collection groups that have been created. No SNMP data will be polled until they have been added to the data collection groups.

You can do this via the GUI rather than adding the systemDef configs by hand to the bottom of the relevant /opt/opennms/etc/datacollection/<whatever>.xml files. Go to:

Admin > Manage SNMP Collections and Data Collection Groups > Data Collection Groups Tab

In the Select Data Collection Group File locate one of the Panasas xml files that was created. Then click on the System Definitions Tab below:

Click on the Add System Definition button.

Enter a relevant Group Name; it should relate to the MIB groups within the collection group that are added below.

The System OID/Mask was the key part of the entire process. If this isn’t right, nothing will work. For all of the Panasas monitoring I used a Mask based on the System OID. To get this info I used the following command, taken from the SNMP Reports How-to:

snmpget -v2c -On -c <community> <host> sysObjectID.0

For example:

[root@vinz opennms]# snmpget -v 1 -On -c public 10.7.18.16 sysObjectID.0
.1.3.6.1.2.1.1.2.0 = OID: .1.3.6.1.4.1.10159.1.3.0
[root@vinz opennms]#

Initially you would take the entire OID returned and add a trailing full stop. To test that it works, run the following (I pipe to more so I can Ctrl+C out if it works):

snmpwalk -v 1 -c public 10.7.18.16 .1.3.6.1.4.1.10159.1.3.0 | more

This didn’t work but dropping the last number did:

[root@vinz opennms]# snmpwalk -v 1 -c public 10.7.18.16 .1.3.6.1.4.1.10159.1.3.0 | more
[root@vinz opennms]# snmpwalk -v 1 -c public 10.7.18.16 .1.3.6.1.4.1.10159.1.3 | more
SNMPv2-SMI::enterprises.10159.1.3.1.1.0 = INTEGER: 100
SNMPv2-SMI::enterprises.10159.1.3.1.2.1.1.1 = INTEGER: 1
SNMPv2-SMI::enterprises.10159.1.3.1.2.1.1.2 = INTEGER: 2
<SNIP>

So use the following for the mask, including the trailing full stop: .1.3.6.1.4.1.10159.1.3.

UPDATE: Using the above mask can miss lots of OIDs that could be useful. For example, the OID for the human-readable name of a blade contains .1.3.6.1.4.1.10159.1.2. and so would never be captured. The recommended mask is therefore .1.3.6.1.4.1.10159.1. (again including the trailing full stop).
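The mask derivation above can be sketched in a few lines of Python. This is only an illustration: the function names are hypothetical, and it assumes that OpenNMS compares a sysoidMask against the start of the node’s sysObjectID (a plain prefix match), which is worth checking against the OpenNMS documentation for your version.

```python
def parse_snmpget(line):
    """Pull the OID value out of a line such as '... = OID: .1.3.6.1.4.1.10159.1.3.0'."""
    return line.split("= OID:", 1)[1].strip()


def mask_candidates(sys_object_id):
    """Return progressively shorter prefix masks, each ending in a trailing dot."""
    parts = sys_object_id.strip(".").split(".")
    return ["." + ".".join(parts[:n]) + "." for n in range(len(parts) - 1, 0, -1)]


def matches_mask(sys_object_id, mask):
    """Prefix comparison; assumed (not confirmed here) to mirror sysoidMask matching."""
    return sys_object_id.startswith(mask)


# The snmpget output line shown earlier in the post.
line = ".1.3.6.1.2.1.1.2.0 = OID: .1.3.6.1.4.1.10159.1.3.0"
sys_oid = parse_snmpget(line)

# The first candidate drops the last number, the second drops one more.
narrow, broad = mask_candidates(sys_oid)[:2]
print(narrow)
print(broad)
print(matches_mask(sys_oid, narrow), matches_mask(sys_oid, broad))
```

The first two candidates correspond to the two masks discussed above, the narrower one ending in .1.3. and the broader one ending in .1.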

In the MIB Groups pull down list choose the required MIB group and then click on Add Group

Click Save

This will then add the following to the data collection group’s xml file:

<systemDef name="Blade Set">
  <sysoidMask>.1.3.6.1.4.1.10159.1.3.</sysoidMask>
  <collect>
    <includeGroup>panBSetTable</includeGroup>
  </collect>
</systemDef>
</datacollection-group>

Repeat this for all required data collection groups and MIB groups.

Configuring the collection service

The following section configures the OpenNMS collectd service to use the newly created SNMP collection and its collection groups, and tells it which hosts to collect from. This config within /opt/opennms/etc/collectd-configuration.xml needs to be updated whenever a new Panasas system is added. Below is the configuration with two systems, panasas01 and panasas-ota:

<package name="PANASAS">
  <filter>IPADDR != '0.0.0.0'</filter>
  <specific>10.7.18.16</specific>
  <specific>10.10.4.2</specific>
  <service name="SNMP" interval="300000" user-defined="false" status="on">
    <parameter key="collection" value="Panasas"/>
  </service>
</package>
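Since this package has to be edited for every new system, the change can be scripted. The following is a minimal sketch using Python’s standard xml.etree.ElementTree. It works on the package fragment only rather than the whole collectd-configuration.xml file, the add_specific helper is hypothetical, and it simply keeps the element order shown in this post (filter, then specific entries, then service).

```python
import xml.etree.ElementTree as ET

# The PANASAS package with only the first system configured.
PACKAGE_XML = """<package name="PANASAS">
  <filter>IPADDR != '0.0.0.0'</filter>
  <specific>10.7.18.16</specific>
  <service name="SNMP" interval="300000" user-defined="false" status="on">
    <parameter key="collection" value="Panasas"/>
  </service>
</package>"""


def add_specific(package, ip):
    """Insert <specific>ip</specific> after the existing ones; return False if already there."""
    children = list(package)
    if any(c.tag == "specific" and (c.text or "").strip() == ip for c in children):
        return False
    # Place the new entry after the last <filter>/<specific>, before any <service>.
    last = max(
        (i for i, c in enumerate(children) if c.tag in ("filter", "specific")),
        default=-1,
    )
    new = ET.Element("specific")
    new.text = ip
    package.insert(last + 1, new)
    return True


package = ET.fromstring(PACKAGE_XML)
added = add_specific(package, "10.10.4.2")        # new director blade
added_again = add_specific(package, "10.10.4.2")  # second call is a no-op
specifics = [e.text for e in package.findall("specific")]
print(added, added_again, specifics)
```

OpenNMS still needs a restart after the file changes, as noted in the quick steps.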

Add Panasas System to Opennms SNMP config

Steps Needed Per Panasas System

Panasas only supports SNMP v1 so you’ll need to add the following configuration to the /opt/opennms/etc/snmp-config.xml file:

<definition version="v1" retry="3">
  <specific>10.7.18.16</specific>
  <specific>10.10.4.2</specific>
</definition>

Panasas Poller Package

The file /opt/opennms/etc/poller-configuration.xml handles the services that OpenNMS will monitor. Out of the box there is a default package, called example1, which encompasses all possible IP addresses. Left as is, this default package will raise some annoying outages for polled services which aren’t required.

The customisation I made to this file was to add an exclusion entry in the default package for the Panasas system’s entire IP range:

<package name="example1">
  <filter>IPADDR != '0.0.0.0'</filter>
  <exclude-range begin="10.7.18.16" end="10.7.18.250" />
  <include-range begin="1.1.1.1" end="254.254.254.254" />

I then created a new panasas package, pretty much copied from example1 but including only the relevant services: ICMP, SNMP and SSH.

<package name="panasas">
  <filter>IPADDR != '0.0.0.0'</filter>
  <include-range begin="10.7.18.16" end="10.7.18.250" />
  <rrd step="300">
    <rra>RRA:AVERAGE:0.5:1:2016</rra>
    <rra>RRA:AVERAGE:0.5:12:1488</rra>
    <rra>RRA:AVERAGE:0.5:288:366</rra>
    <rra>RRA:MAX:0.5:288:366</rra>
    <rra>RRA:MIN:0.5:288:366</rra>
  </rrd>
  <service name="ICMP" interval="300000" user-defined="false" status="on">
    <parameter key="retry" value="2" />
    <parameter key="timeout" value="3000" />
    <parameter key="rrd-repository" value="/opt/opennms/share/rrd/response" />
    <parameter key="rrd-base-name" value="icmp" />
    <parameter key="ds-name" value="icmp" />
  </service>
  <service name="SNMP" interval="300000" user-defined="false" status="on">
    <parameter key="oid" value=".1.3.6.1.2.1.1.2.0" />
  </service>
  <service name="SSH" interval="300000" user-defined="false" status="on">
    <parameter key="retry" value="1" />
    <parameter key="banner" value="SSH" />
    <parameter key="port" value="22" />
    <parameter key="timeout" value="3000" />
    <parameter key="rrd-repository" value="/opt/opennms/share/rrd/response" />
    <parameter key="rrd-base-name" value="ssh" />
    <parameter key="ds-name" value="ssh" />
  </service>
  <downtime interval="30000" begin="0" end="300000" /><!-- 30s, 0, 5m -->
  <downtime interval="300000" begin="300000" end="43200000" /><!-- 5m, 5m, 12h -->
  <downtime interval="600000" begin="43200000" end="432000000" /><!-- 10m, 12h, 5d -->
  <downtime begin="432000000" delete="true" /><!-- anything after 5 days delete -->
</package>

Both packages need to be updated for every new system.
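To see which poller package an address ends up in, the include and exclude ranges can be checked with Python’s standard ipaddress module. This is a rough sketch: packages_for and the PACKAGES dictionary are hypothetical stand-ins for the XML above, and the logic assumes that an exclude range takes precedence over an include range within the same package.

```python
import ipaddress


def in_range(ip, begin, end):
    """True if ip lies within the inclusive range begin..end."""
    addr = ipaddress.ip_address(ip)
    return ipaddress.ip_address(begin) <= addr <= ipaddress.ip_address(end)


def packages_for(ip, packages):
    """Names of packages that include ip and do not exclude it (assumed precedence)."""
    selected = []
    for name, rules in packages.items():
        included = any(in_range(ip, b, e) for b, e in rules["include"])
        excluded = any(in_range(ip, b, e) for b, e in rules["exclude"])
        if included and not excluded:
            selected.append(name)
    return selected


# Ranges copied from the two packages shown in this post.
PACKAGES = {
    "example1": {
        "include": [("1.1.1.1", "254.254.254.254")],
        "exclude": [("10.7.18.16", "10.7.18.250")],
    },
    "panasas": {
        "include": [("10.7.18.16", "10.7.18.250")],
        "exclude": [],
    },
}

first_system = packages_for("10.7.18.16", PACKAGES)
second_system = packages_for("10.10.4.2", PACKAGES)
print(first_system)
print(second_system)
```

With only the ranges shown here, the second director blade (10.10.4.2) would still be polled by example1, which is why the ranges for each additional system have to be added to both packages.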

Adding the Panasas System as a node

Create a Provisioning Requisition entry (this is the new name for provisioning groups) via Admin > Manage Provisioning Requisitions.

In the text entry field, enter a relevant name, then click on the Add New Requisition button.

Click on Edit to the right of Requisition

Click on Add Node

Enter in a descriptive name in Node

Click Save

Click on the Add Interface link

Enter the relevant IP address and a description, say dir blade, leave Status and SNMP Primary as they are, then click Save

Click on Add Service link and add the following services

- SSH

- SNMP

- ICMP

Click on Done

The system will be added and data collection and graphing will begin. You can speed this up by clicking on the Synchronize button.

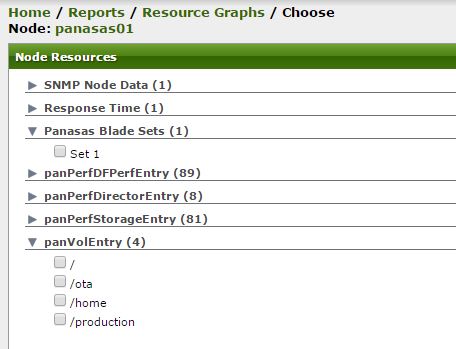
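For reference, the GUI steps above amount to building a small requisition document. The sketch below generates an equivalent one in Python, assuming the standard OpenNMS requisition (model-import) XML format. The foreign-id is a hypothetical value (the GUI generates its own), the node label is only an example, and the status and snmp-primary values are my assumption of what the GUI leaves as defaults.

```python
import xml.etree.ElementTree as ET

# Namespace assumed from the standard OpenNMS requisition (model-import) format.
NS = "http://xmlns.opennms.org/xsd/config/model-import"


def build_requisition(foreign_source, foreign_id, node_label, ip, descr, services):
    """Return requisition XML for one node with a single interface and its services."""
    root = ET.Element("model-import", {"xmlns": NS, "foreign-source": foreign_source})
    node = ET.SubElement(root, "node", {"foreign-id": foreign_id, "node-label": node_label})
    iface = ET.SubElement(
        node,
        "interface",
        # status and snmp-primary are assumptions about the GUI defaults.
        {"ip-addr": ip, "descr": descr, "status": "1", "snmp-primary": "P"},
    )
    for name in services:
        ET.SubElement(iface, "monitored-service", {"service-name": name})
    return ET.tostring(root, encoding="unicode")


requisition_xml = build_requisition(
    foreign_source="Panasas",
    foreign_id="panasas01-dir",  # hypothetical; the GUI generates its own ID
    node_label="panasas01",      # example label only
    ip="10.7.18.16",
    descr="dir blade",
    services=["SSH", "SNMP", "ICMP"],
)
print(requisition_xml)
```

Comparing this with what the GUI actually saved is a quick way to confirm that the interface and all three services made it into the requisition before clicking Synchronize.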

Human Readable Resource Graph Entries

Out of the box, OpenNMS lists each group of resource graphs for a Panasas node by the label attribute of the resourceType element in the corresponding data collection XML file. Each instance within that resource group, say a blade or a blade set, is listed by whatever the resourceLabel attribute of the same resourceType element returns.

What is then shown on the web page might not be as descriptive as you’d like. For example, in the blade set configuration the resource graph group is listed by default as panBSetEntry, and the instances, i.e. the bladesets, are listed as OIDs:

<resourceType name="panBSetEntry" label="panBSetEntry" resourceLabel="${index}">

This is not very user friendly, and it’s even worse for per blade resource graphs.

To improve on this, the key component, resourceLabel, is changed to ${string(index)}, using the OpenNMS wiki page as a reference. For the Bladeset and Volume resource groups this returns a satisfactory and mappable list.

<resourceType name="panBSetEntry" label="Panasas Blade Sets" resourceLabel="${string(index)}">

<resourceType name="panVolEntry" label="panVolEntry" resourceLabel="${string(index)}">
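To show why a string conversion of the index helps, here is a small Python sketch of the idea. It is an illustration only, not the OpenNMS implementation of ${string(index)}: it assumes the table index is a dotted list of ASCII character codes, optionally prefixed with the string length, and the bladeset name used is hypothetical.

```python
def decode_string_index(index):
    """Turn a dotted-decimal table index into text, assuming it encodes ASCII characters."""
    numbers = [int(part) for part in index.strip(".").split(".")]
    # Variable-length string indexes are often prefixed with their length;
    # drop the first number when it equals the count of the remaining ones.
    if numbers and numbers[0] == len(numbers) - 1:
        numbers = numbers[1:]
    return "".join(chr(n) for n in numbers)


# Hypothetical index for a bladeset called "Set1" (length 4, then S, e, t, 1).
with_length = decode_string_index("4.83.101.116.49")
without_length = decode_string_index("83.101.116.49")
print(with_length, without_length)
```

With ${index} the web page would show the raw dotted numbers, whereas a string conversion like this yields a readable name.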

This hasn’t worked as well for the actual blades, where it returns the hardware serial number, which is slightly more usable but could still be improved.

For example for the Director Blades, the resourceType is panPerfDirectorEntry. If we look at that entry in the corresponding MIB file we see the INDEX is set to the hardware serial number:

panPerfDirectorEntry OBJECT-TYPE
    SYNTAX PanPerfDirectorEntry
    MAX-ACCESS not-accessible
    STATUS current
    DESCRIPTION
        "A row in panPerfDirectorTable."
    INDEX { panHwNodeHwSN }
    ::= { panPerfDirectorTable 1 }

Updating the INDEX entry to:

INDEX { panHwNodeHwSN, panHwNodeName }

Then also updating the IMPORTS section from:

IMPORTS <SNIP> panHwNodeHwSN FROM PANASAS-HW-MIB-V1

To:

IMPORTS <SNIP> panHwNodeHwSN, panHwNodeName FROM PANASAS-HW-MIB-V1

Then edit the referenced MIB, PANASAS-HW-MIB-V1, and make sure panHwNodeName is set as an index. First in the organisation part at the top, where in this example it’s part of the panHwNode > panHwNodeTable > panHwNodeEntry section:

panHwNodeName [Index]

And then again in the definition area where the index is specified, in this example panHwNodeEntry:

panHwNodeEntry OBJECT-TYPE
    SYNTAX PanHWNodeEntry
    MAX-ACCESS not-accessible
    STATUS current
    DESCRIPTION
        "An entry in panHwNodeTable."
    INDEX { panHwNodeHwSN, panHwNodeName }
    ::= { panHwNodeTable 1 }

Via the GUI, go into the MIB compiler, right-click on the compiled MIB and choose Generate Data Collection. This will OVERWRITE the data collection XML file currently in /opt/opennms/etc/datacollection/, so if you have customisations, back up the file first.

You will then have to recreate, within the GUI, the data collection group and any System Definitions related to the MIB.

You do not have to overwrite your graphs and lose any customisations, nor do you have to restart OpenNMS for this to take effect.

I’VE STILL NOT GOT BLADES LISTED BY THEIR NAME

Customised Graphs

I wanted to group together some of the monitored metrics; otherwise each page would be filled with lots of single graphs. For example, I combined the following storage blade metrics:

- Total Disk Capacity

- Used Disk Capacity

- Available Disk Capacity

- Reserved Disk Capacity

I commented out all the individual sections in the /opt/opennms/etc/snmp-graph.properties.d/PANASAS-PERFORMANCE-MIB-V1-graph.properties file and created a new section:

reports=panPerfDirectorTable.panPerfDirectorCpuUtil, \
<SNIP>
panPerfStorageTable.StorageBladeCapacity, \
<SNIP>

report.panPerfStorageTable.StorageBladeCapacity.name=Capacity info in Giga Bytes (GB).
report.panPerfStorageTable.StorageBladeCapacity.columns=panPerfStoragCapTot,panPerfStoraCapUsed,panPerfStorCapAvail,panPerfStorCapReser
report.panPerfStorageTable.StorageBladeCapacity.type=panPerfStorageEntry
report.panPerfStorageTable.StorageBladeCapacity.description=Capacity info in Giga Bytes
report.panPerfStorageTable.StorageBladeCapacity.command=--title="Capacity info in Giga Bytes (GB)" \
 DEF:totalcap={rrd1}:panPerfStoragCapTot:AVERAGE \
 DEF:usedcap={rrd2}:panPerfStoraCapUsed:AVERAGE \
 DEF:availcap={rrd3}:panPerfStorCapAvail:AVERAGE \
 DEF:resvcap={rrd4}:panPerfStorCapReser:AVERAGE \
 LINE1:totalcap#ff0000:"Total disk capacity" \
 GPRINT:totalcap:AVERAGE:"Avg\\: %8.2lf %s" \
 GPRINT:totalcap:MIN:"Min\\: %8.2lf %s" \
 GPRINT:totalcap:MAX:"Max\\: %8.2lf %s\n" \
 LINE1:usedcap#0000ff:"Used disk capacity" \
 GPRINT:usedcap:AVERAGE:"Avg\\: %8.2lf %s" \
 GPRINT:usedcap:MIN:"Min\\: %8.2lf %s" \
 GPRINT:usedcap:MAX:"Max\\: %8.2lf %s\n" \
 LINE1:availcap#00ff00:"Available disk capacity" \
 GPRINT:availcap:AVERAGE:"Avg\\: %8.2lf %s" \
 GPRINT:availcap:MIN:"Min\\: %8.2lf %s" \
 GPRINT:availcap:MAX:"Max\\: %8.2lf %s\n" \
 LINE1:resvcap#ffff00:"Reserved disk capacity" \
 GPRINT:resvcap:AVERAGE:"Avg\\: %8.2lf %s" \
 GPRINT:resvcap:MIN:"Min\\: %8.2lf %s" \
 GPRINT:resvcap:MAX:"Max\\: %8.2lf %s\n"

I then restarted the OpenNMS services.
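The combined report follows a regular pattern: one DEF per column, then a LINE1 and three GPRINT entries per series. If you have several of these to write, a short script can generate the text. The sketch below is one way to do it in Python; build_command and the SERIES list are hypothetical helpers, and the output is intended to match the StorageBladeCapacity properties from this post rather than any official template.

```python
# (variable, RRD column, colour, legend) for each series in the combined graph.
SERIES = [
    ("totalcap", "panPerfStoragCapTot", "ff0000", "Total disk capacity"),
    ("usedcap", "panPerfStoraCapUsed", "0000ff", "Used disk capacity"),
    ("availcap", "panPerfStorCapAvail", "00ff00", "Available disk capacity"),
    ("resvcap", "panPerfStorCapReser", "ffff00", "Reserved disk capacity"),
]
PREFIX = "report.panPerfStorageTable.StorageBladeCapacity"


def build_command(title, series):
    """Build the .command value: one DEF per column, then LINE1 plus three GPRINTs each."""
    lines = ['--title="' + title + '"']
    for number, (var, column, _colour, _label) in enumerate(series, start=1):
        lines.append("DEF:" + var + "={rrd" + str(number) + "}:" + column + ":AVERAGE")
    for var, _column, colour, label in series:
        lines.append("LINE1:" + var + "#" + colour + ':"' + label + '"')
        for stat, short, end in (("AVERAGE", "Avg", ""), ("MIN", "Min", ""), ("MAX", "Max", r"\n")):
            lines.append("GPRINT:" + var + ":" + stat + ':"' + short + r"\\: %8.2lf %s" + end + '"')
    # Properties-file continuation: each line ends with a space and a backslash.
    return " \\\n".join(lines)


columns = ",".join(column for _var, column, _colour, _label in SERIES)
command = build_command("Capacity info in Giga Bytes (GB)", SERIES)
print(PREFIX + ".columns=" + columns)
print(PREFIX + ".command=" + command)
```

The {rrdN} numbers follow the order of the columns property, so both are derived from the same list to keep them in step.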

While OpenNMS looks powerful, it is the most complicated one when it comes to configuration.

I’ve never dealt with SNMP before, but I got a device from a company called Packet Power (power monitoring) that has an SNMP interface. I was able to successfully poll custom data from the device through the vendor’s MIB using PRTG Network Monitor; the interface is so easy.

With OpenNMS there are so many confusing things, stuff you can do multiple ways. Here is my problem:

How on Earth am I going to scan/poll/obtain SNMP data from a device with a custom MIB? Here is what I’ve done so far:

1. Uploaded the MIB; it compiled with no errors

2. Generated data collection, with system definitions for the kind of device within the MIB

3. Added the SNMP interface (Home => Admin => Configure SNMP by IP)

4. Added a requisition (Home => Admin Provisioning => Requisitions)

The node appears on the node info menu, with SNMP attributes polled correctly, but I don’t know how to tell that node to scan points from that MIB or the data collection I configured.

Any idea?

cheers

Found any solution? I’m in the same situation as you at the time of this comment.

At work we’ve moved away from Panasas and I soon stopped using OpenNMS after the blog post as it was taking too much effort and I couldn’t fix what I wanted to, so sorry can’t help you.